Using supercomputers to predict severe tornadoes and large hail storms

A team of scientists based at the University of Oklahoma (OU) is using supercomputers to learn how the most severe tornadoes and large hail form, and to predict them in advance.

When a hailstorm moved through Fort Worth, Texas, on May 5, 1995, it battered the highly populated area with hail up to 10 cm (4 inches) in diameter and struck a local outdoor festival known as the Fort Worth Mayfest. The hailstorm was caused by a high-precipitation supercell that developed ahead of and near the crescent of a fast-moving bow echo.

At the time, the Mayfest storm was the costliest hailstorm in US history, causing more than $2 billion in damage and injuring at least 100 people. Flash flooding and lightning from the same storm killed at least 13 people.

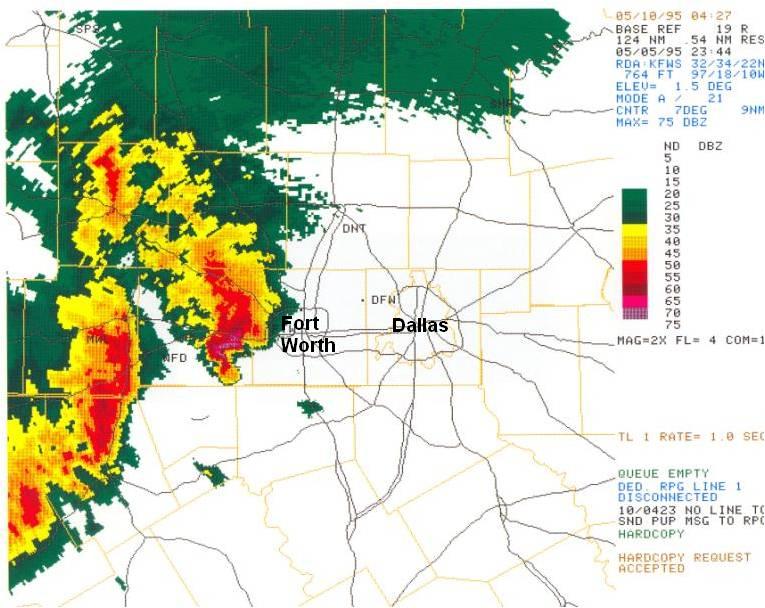

Radar imagery from 18:44 on May 5, 1995, shows the intense supercell west of Fort Worth moving east. Credit: NWS

Scientists know that storms with a rotating updraft on their southwestern sides, which are particularly common in the spring on the US southern plains, are associated with the biggest, most severe tornadoes and also produce a lot of large hail. However, clear ideas on how they form and how to predict these events in advance have proven elusive.

To solve that mystery, OU-based scientists are working on the Severe Hail Analysis, Representation and Prediction (SHARP) project and performing experimental weather forecasts using the Stampede supercomputer at the Texas Advanced Computing Center. After three years of research, they have gained a better understanding of the conditions that cause severe hail to form and are now producing predictions with far greater accuracy than those currently used operationally.

SHARP is expected to advance the science of severe weather prediction, defining a paradigm linking data assimilation and data mining that could be applied to predict other convective-scale hazards such as downbursts and tornadoes. With sufficient computing resources, the techniques and algorithms developed in this project could be applied to support real-time severe weather warning operations, as envisioned in the NWS "Warn-on-Forecast" paradigm.

Broader impacts of the project will include enhanced cross-disciplinary connections and potential economic benefits to sectors, including aviation, that are especially vulnerable to hail. Results will aid in improving lead time and user confidence in hail warnings, giving vulnerable industries an increased opportunity to mitigate damage and individuals additional time to seek shelter.

Improving model accuracy

To predict hail storms, or weather in general, scientists have developed mathematically based physics models of the atmosphere and the complex processes within, and computer codes that represent these physical processes on a grid consisting of millions of points. Numerical models in the form of computer codes are integrated forward in time starting from the observed current conditions to determine how a weather system will evolve and whether a serious storm will form.
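As a rough illustration of what "integrated forward in time" on a grid means, the sketch below advances a one-dimensional field with a constant wind using a first-order upwind scheme. It is a toy under stated assumptions, not the SHARP model: real forecast codes solve coupled three-dimensional equations with far more physics, and the function names, grid size, wind speed and time step here are all hypothetical.

```python
def step_upwind(field, wind_speed, dx, dt):
    """Advance a periodic 1-D field by one time step (first-order upwind)."""
    # Courant number: fraction of a grid cell the wind crosses per step.
    # The scheme is stable for 0 <= courant <= 1.
    courant = wind_speed * dt / dx
    # field[i - 1] wraps around at i = 0, which makes the domain periodic.
    return [field[i] - courant * (field[i] - field[i - 1])
            for i in range(len(field))]


def integrate(field, wind_speed, dx, dt, n_steps):
    """Start from the 'observed' state and step the model forward in time."""
    for _ in range(n_steps):
        field = step_upwind(field, wind_speed, dx, dt)
    return field


# Hypothetical initial condition: a single disturbance at grid point 5.
initial = [0.0] * 20
initial[5] = 1.0

# 500 m grid spacing, 10 m/s wind, 50 s time step (Courant number of 1).
final = integrate(initial, wind_speed=10.0, dx=500.0, dt=50.0, n_steps=4)
peak_index = final.index(max(final))
```

With these values the disturbance moves exactly one grid point per step, so after four steps it sits four points downwind of where it started.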

Because of the wide range of spatial and temporal scales that numerical weather predictions must cover and the fast turnaround required, they are almost always run on powerful supercomputers. The finer the resolution of the grid used to simulate the phenomena, the more accurate the forecast; but the more accurate the forecast, the more computation required.

The National Weather Service's highest-resolution official forecasts use a grid spacing of three kilometers. The model the Oklahoma team is using in the SHARP project, on the other hand, uses a grid spacing of 500 meters, which is six times finer in each horizontal direction.

"This lets us simulate the storms with a lot higher accuracy," says Nathan Snook, an OU research scientist. "But the trade-off is, to do that, we need a lot of computing power, more than 100 times that of three-kilometer simulations. Which is why we need Stampede."
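A back-of-the-envelope estimate shows why a sixfold refinement can cost more than 100 times as much. The sketch below assumes that the number of vertical levels stays fixed and that the time step must shrink in proportion to the grid spacing (a common stability constraint); both are simplifying assumptions for illustration, not the team's own cost accounting, and `relative_cost` is a hypothetical helper.

```python
def relative_cost(coarse_spacing_m, fine_spacing_m):
    """Estimate how much more computation the finer grid needs."""
    refinement = coarse_spacing_m / fine_spacing_m
    # Refining both horizontal directions multiplies the grid points
    # by refinement squared (vertical levels assumed unchanged).
    horizontal_points = refinement ** 2
    # Assumed: the time step scales with grid spacing, so the same
    # forecast length needs 'refinement' times as many steps.
    time_steps = refinement
    return refinement, horizontal_points * time_steps


refinement, cost = relative_cost(3000.0, 500.0)
print(f"{refinement:.0f}x finer grid, roughly {cost:.0f}x the computation")
```

Under these assumptions the estimate is 6 × 6 × 6 = 216, which is consistent with the "more than 100 times" figure in the quote.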

Stampede is one of the largest computing systems in the world for open science research. As an NSF petascale HPC acquisition, this system provides unprecedented computational capabilities to the national research community, enabling breakthrough science that has never before been possible. © TACC 2013.

Stampede is currently one of the most powerful supercomputers in the US for open science research and serves as an important part of NSF's portfolio of advanced cyberinfrastructure resources, enabling cutting-edge computational and data-intensive science and engineering research nationwide.

According to Snook, there's a major effort underway to move to a "warn-on-forecast" paradigm, that is, to use short-term, computer-model-based forecasts to predict what will happen over the next several hours and to warn the public based on those predictions, as opposed to warning only once storms have formed and been observed.

"How do we get the models good enough that we can warn the public based on them?" Snook asks. "That's the ultimate goal of what we want to do, get to the point where we can make hail forecasts two hours in advance. 'A storm is likely to move into downtown Dallas, now is a good time to act.'"

With such a system in place, it might be possible to prevent injuries to vulnerable people, divert planes or move them into hangars, and protect cars and other property.

Studying the storms that produced the May 20, 2013, tornado in Moore, Oklahoma, which led to 24 deaths, destroyed 1,150 homes and resulted in an estimated $2 billion in damage, the researchers developed zero- to 90-minute hail forecasts that captured the storm's impact better than the National Weather Service forecasts produced at the time.

"The storms in the model move faster than the actual storms," Snook says. "But the model accurately predicted which three storms would produce strong hail and the path they would take."

The models required Stampede to solve multiple fluid dynamics equations at millions of grid points and also incorporate the physics of precipitation, turbulence, radiation from the Sun and energy changes from the ground. Moreover, the researchers had to simulate the storm multiple times – as an ensemble – to estimate and reduce the uncertainty in the data and in the physics of the weather phenomena themselves.
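The idea of using an ensemble to express uncertainty can be sketched in a few lines: each member is one run of the model, and the spread among members indicates how confident the forecast is. The member values, the 25 mm threshold and the `ensemble_summary` helper below are all hypothetical, chosen only to illustrate the calculation; they are not SHARP output.

```python
from statistics import mean, stdev


def ensemble_summary(member_hail_mm, threshold_mm):
    """Summarize an ensemble of maximum hail size forecasts at one location."""
    exceed = sum(1 for size in member_hail_mm if size >= threshold_mm)
    return {
        "mean_mm": mean(member_hail_mm),      # best single estimate
        "spread_mm": stdev(member_hail_mm),   # disagreement among members
        "prob_exceed": exceed / len(member_hail_mm),  # fraction at or over threshold
    }


# Hypothetical maximum hail diameters (mm) from eight ensemble members.
members = [18.0, 32.0, 27.0, 41.0, 22.0, 35.0, 29.0, 12.0]
summary = ensemble_summary(members, threshold_mm=25.0)
```

Here five of the eight members reach the threshold, so the sketch reports a probability of 0.625. A wide spread would signal that the members disagree and the forecast should be treated with more caution.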

The potential of hail prediction

Though the ultimate impacts of the numerical experiments will take some time to realize, their potential motivates Snook and the severe hail prediction team.

"This has the potential to change the way people look at severe weather predictions," Snook says. "Five or 10 years down the road, when we have a system that can tell you that there's a severe hail storm coming hours in advance, and to be able to trust that – it will change how we see severe weather. Instead of running for shelter, you'll know there's a storm coming and can schedule your afternoon."

Ming Xue, the leader of the project and director of the Center for Analysis and Prediction of Storms (CAPS) at OU, gave a similar assessment.

"Given the promise shown by the research and the ever-increasing computing power, numerical prediction of hailstorms and warnings issued based on the model forecasts, with a couple of hours of lead time, may indeed be realized operationally in a not-too-distant future, and the forecasts will also be accompanied by information on how certain the forecasts are."

- The team published its results in the proceedings of the 20th Conference on Integrated Observing and Assimilation Systems for Atmosphere, Oceans and Land Surface (IOAS-AOLS); they will also be published in an upcoming issue of the American Meteorological Society journal Weather and Forecasting.

Source: NSF

Featured image: Great Bend Supercell by Lane Pearman (CC – Flickr)
